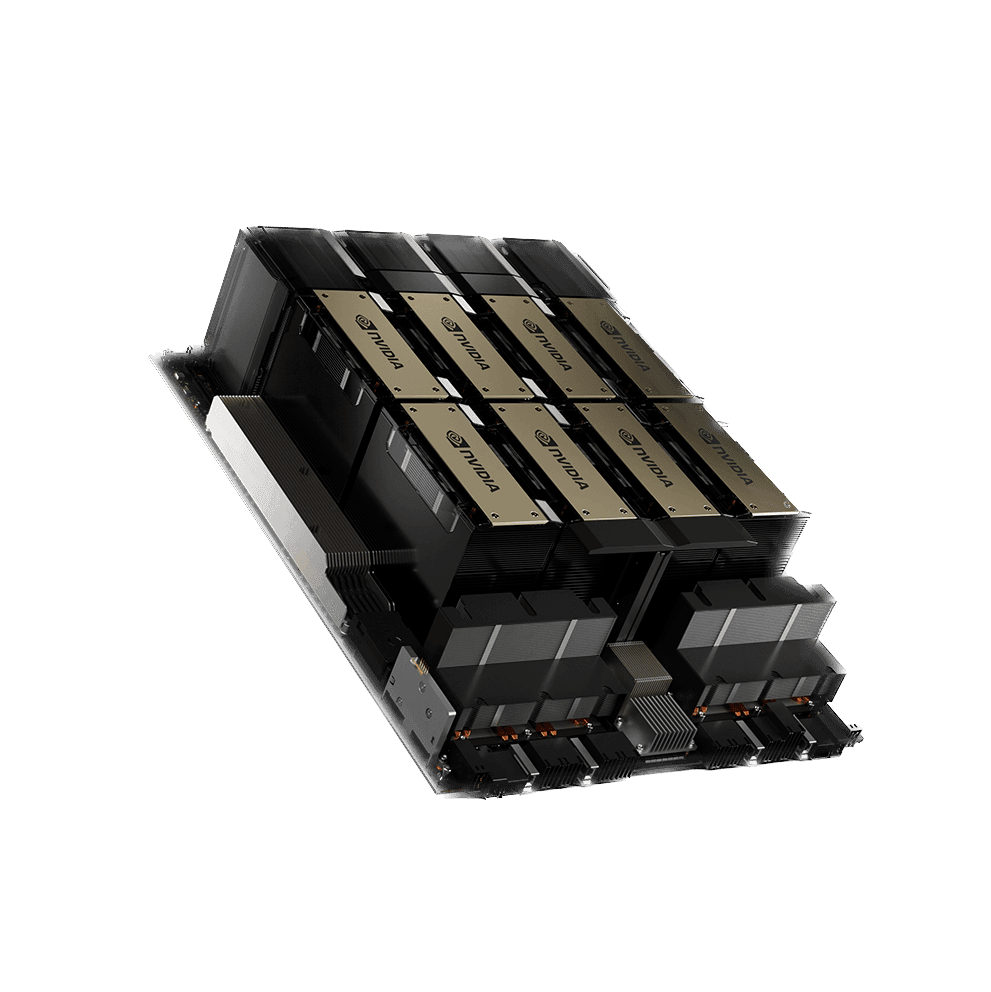

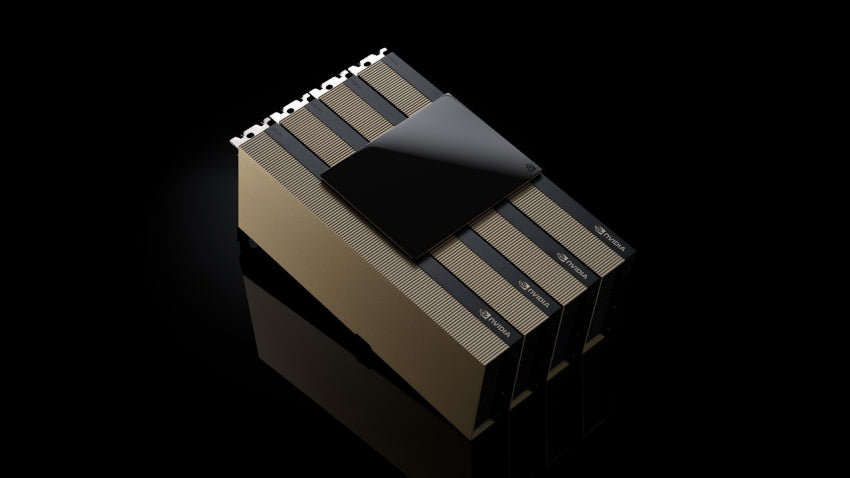

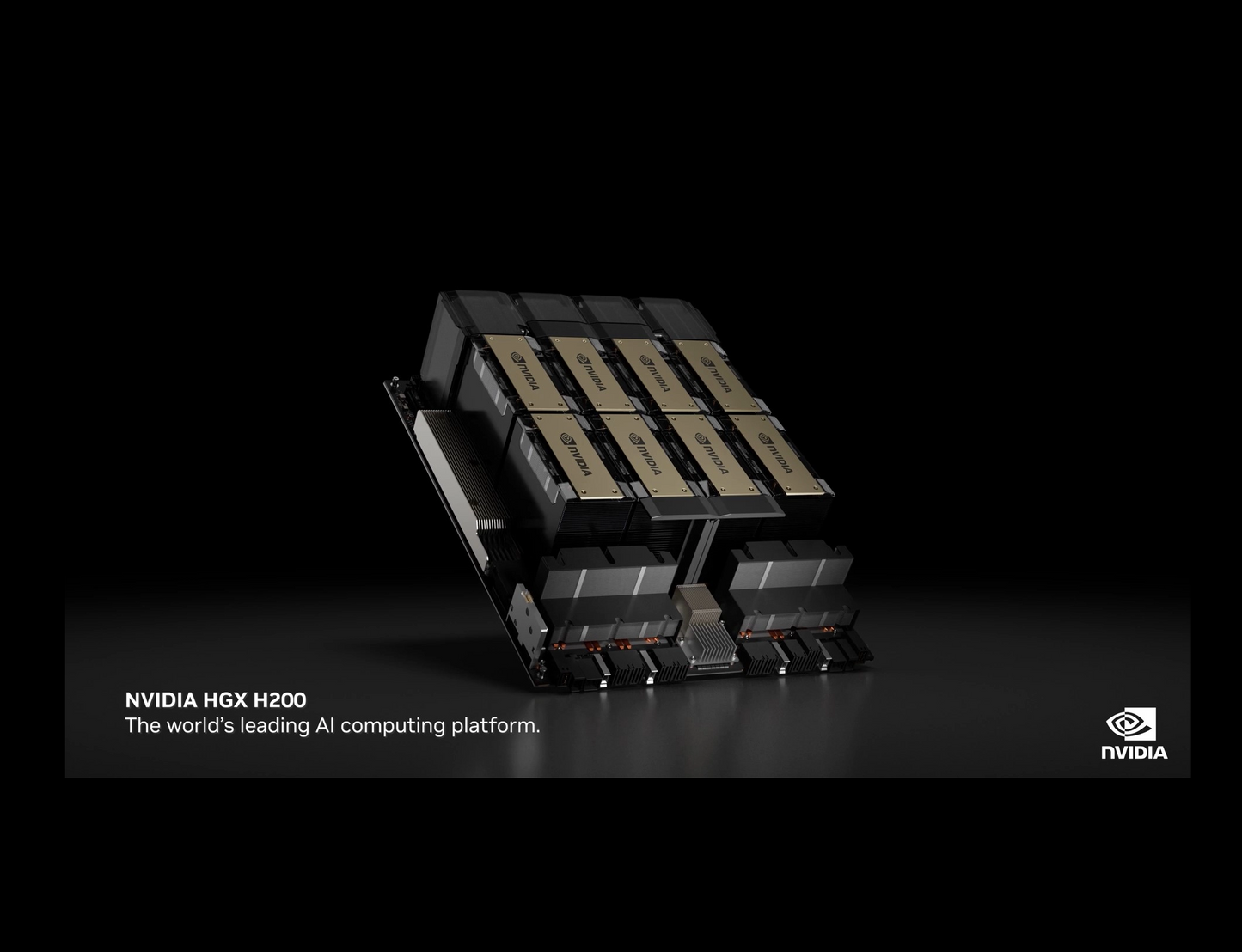

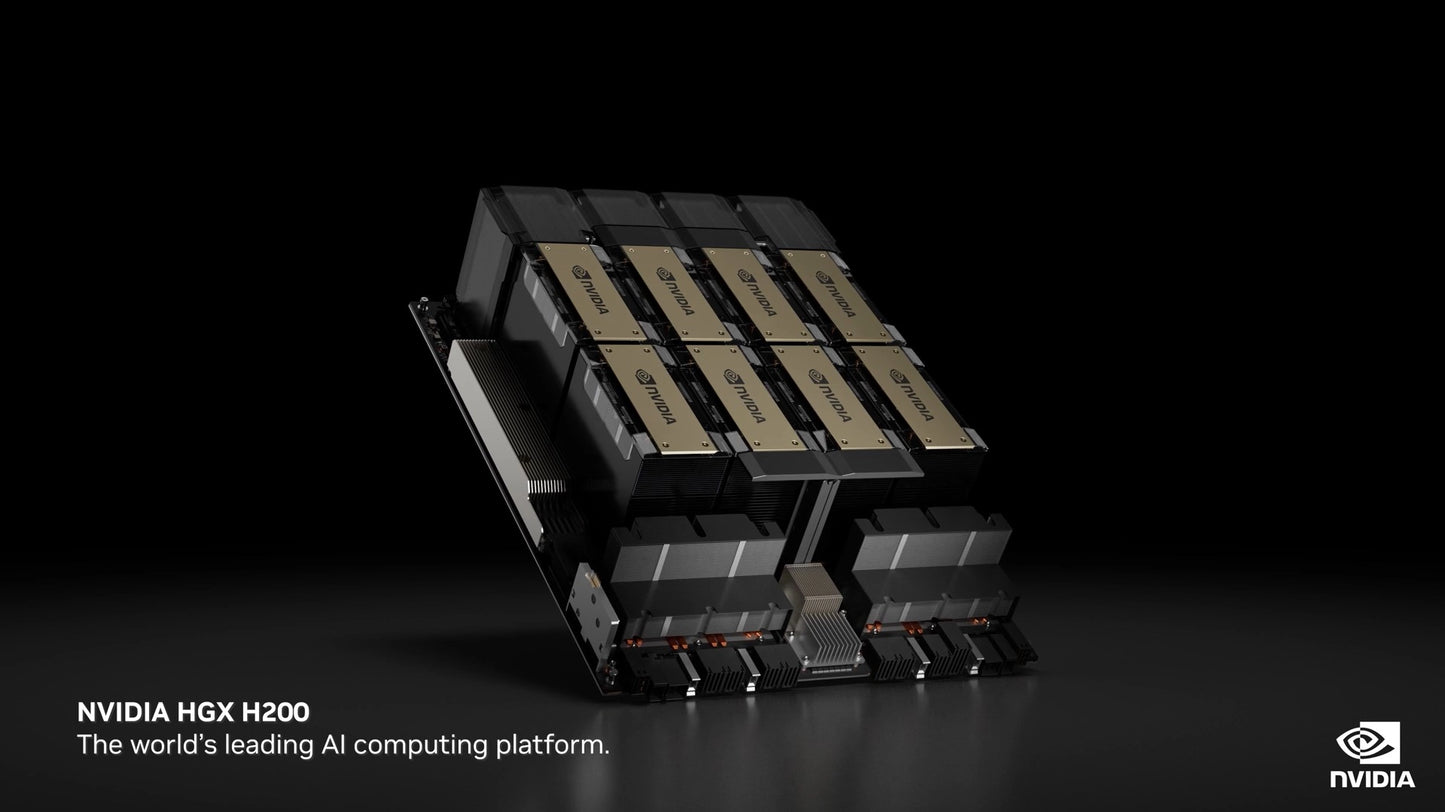

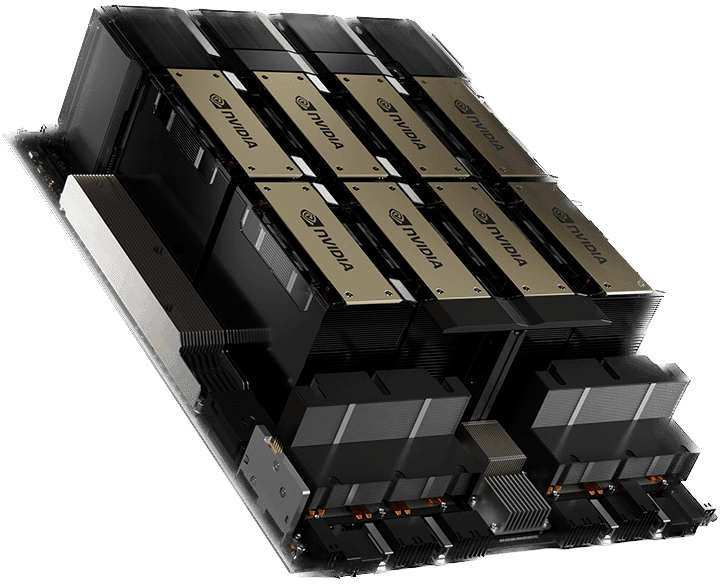

NVIDIA H200 Tensor Core GPU (NVL/SXM)

In stock

Regular price: £32,050.00 GBP

Sale price: £24,500.00 GBP

All Taxes & Free Shipping applied at Checkout.

Higher Performance With Larger, Faster Memory

The NVIDIA H200 Tensor Core GPU supercharges generative AI and high-

performance computing (HPC) workloads with game-changing performance

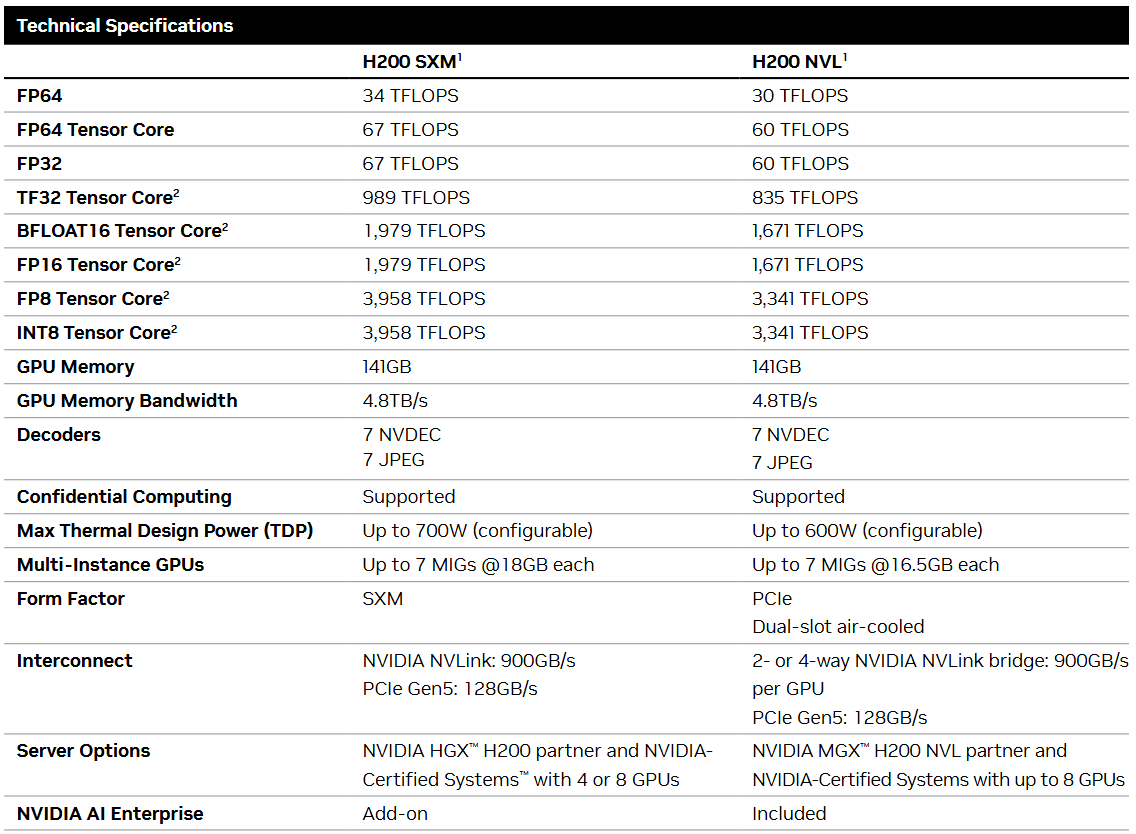

and memory capabilities. Based on the NVIDIA Hopper™ architecture, the NVIDIA H200 is the first GPU to offer 141 gigabytes (GB) of HBM3e memory at 4.8 terabytes per second (TB/s)— that’s nearly double the capacity of the NVIDIA H100 Tensor Core GPU with 1.4X more memory bandwidth. The H200’s larger and faster memory accelerates generative AI and large language models, while advancing scientific computing for HPC workloads with better energy efficiency and lower total cost of ownership.
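For readers who want to check the "nearly double" and "1.4X" comparisons, here is a minimal arithmetic sketch. The H200 figures are the ones quoted above; the H100 SXM figures (80 GB, 3.35 TB/s) are not stated on this page and are assumed from NVIDIA's published H100 specifications.

```python
# H200 figures are taken from the product description above.
H200 = {"memory_gb": 141, "bandwidth_tbs": 4.8}

# ASSUMPTION: H100 SXM figures are not given on this page; they are
# taken from NVIDIA's public H100 specifications.
H100_SXM = {"memory_gb": 80, "bandwidth_tbs": 3.35}


def ratio(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


capacity_ratio = ratio(H200["memory_gb"], H100_SXM["memory_gb"])
bandwidth_ratio = ratio(H200["bandwidth_tbs"], H100_SXM["bandwidth_tbs"])

print(f"Memory capacity:  {capacity_ratio:.2f}x the H100 SXM")
print(f"Memory bandwidth: {bandwidth_ratio:.2f}x the H100 SXM")
```

Under these assumed H100 values, capacity comes out at about 1.76X and bandwidth at about 1.43X, which is consistent with the wording above.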

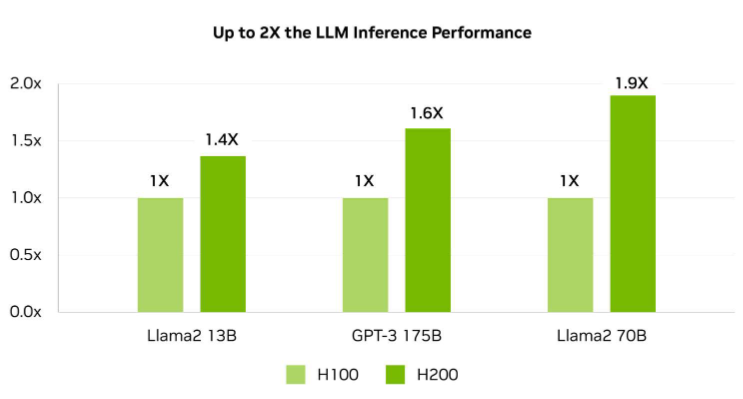

Unlock Insights With High-Performance LLM Inference

In the ever-evolving landscape of AI, businesses rely on large language models to address a diverse range of inference needs. An AI inference accelerator must deliver the highest throughput at the lowest TCO when deployed at scale for a massive user base. The H200 delivers up to double the inference performance of H100 GPUs when handling large language models such as Llama2 70B.
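One way to see why memory capacity matters for Llama2 70B inference is a rough memory budget. The sketch below is an illustration only, not NVIDIA's benchmark method. It uses the sequence lengths and batch sizes from the benchmark note on this page (ISL 2K, OSL 128, batch sizes 8 and 32). Everything else is an assumption: the Llama2 70B layout (80 layers, 8 key/value heads, head dimension 128), FP8 weights, an FP16 key/value cache, and the 80 GB H100 capacity. Activations and runtime overhead are ignored.

```python
# ASSUMPTIONS (not stated on this page): model layout and precisions.
PARAMS = 70e9          # Llama2 70B parameter count
N_LAYERS = 80          # transformer layers
N_KV_HEADS = 8         # key/value heads (grouped-query attention)
HEAD_DIM = 128         # dimension per attention head
WEIGHT_BYTES = 1       # FP8 weights, 1 byte per parameter (assumed)
KV_BYTES = 2           # FP16 key/value cache, 2 bytes per element (assumed)

# From the benchmark note on this page: ISL 2K, OSL 128.
SEQ_LEN = 2048 + 128


def weights_gb() -> float:
    """Memory needed for the model weights, in GB."""
    return PARAMS * WEIGHT_BYTES / 1e9


def kv_cache_gb(batch_size: int) -> float:
    """Key/value cache for `batch_size` full-length sequences, in GB."""
    # Factor of 2 covers one key and one value vector per layer and KV head.
    bytes_per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * KV_BYTES
    return bytes_per_token * SEQ_LEN * batch_size / 1e9


def total_gb(batch_size: int) -> float:
    """Weights plus key/value cache, ignoring activations and overhead."""
    return weights_gb() + kv_cache_gb(batch_size)


def fits(batch_size: int, capacity_gb: float) -> bool:
    """True if the estimated footprint fits in the given GPU memory."""
    return total_gb(batch_size) <= capacity_gb


for batch in (8, 32):
    print(f"batch {batch}: ~{total_gb(batch):.1f} GB, "
          f"fits 80 GB: {fits(batch, 80)}, fits 141 GB: {fits(batch, 141)}")
```

Under these assumptions, a batch of 8 needs roughly 76 GB and a batch of 32 roughly 93 GB, so the larger batch would only fit within the H200's 141 GB. Real deployments differ with precision, framework, and overhead.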

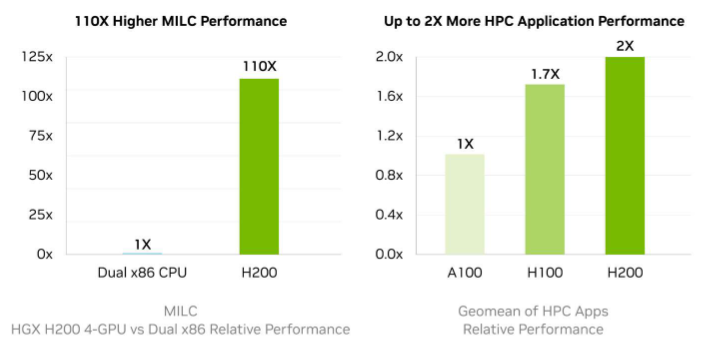

Supercharge High-Performance Computing

Memory bandwidth is crucial for HPC applications, as it enables faster data transfer and reduces complex processing bottlenecks. For memory-intensive HPC applications like simulations, scientific research, and artificial intelligence, the H200’s higher memory bandwidth ensures that data can be accessed and manipulated efficiently, leading to up to 110X faster time to results compared to CPUs.

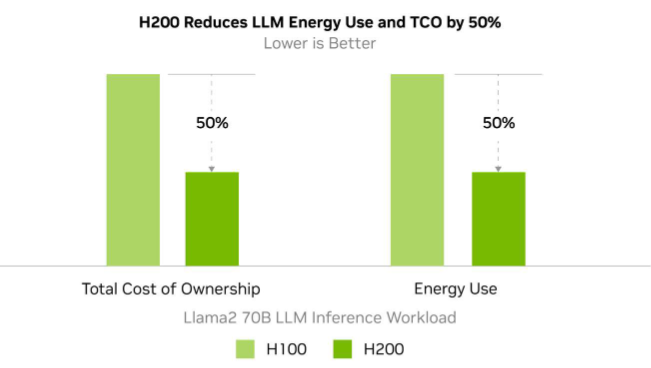

Reduce Energy and TCO

With the introduction of H200, energy efficiency and TCO reach new levels. This cutting-edge technology offers unparalleled performance, all within the same power profile as the H100 Tensor Core GPU. AI factories and supercomputing systems that are not only faster but also more eco-friendly deliver an economic edge that propels the AI and scientific communities forward.

Preliminary specifications. May be subject to change.

Llama2 70B: ISL 2K, OSL 128 | Throughput | H100 SXM 1x GPU BS 8 | H200 SXM 1x GPU BS 32
